The Winter School on Speech and Audio Processing (WiSSAP) is an annual school, organized in India since 2006. It provides a forum for researchers to enhance their expertise by exposing them to areas at the forefront of speech and audio signal processing.

The past 14 WiSSAPs have covered different aspects of speech and audio processing, including perception, recognition, coding, enhancement, synthesis, spatialization, production and hearing aids.

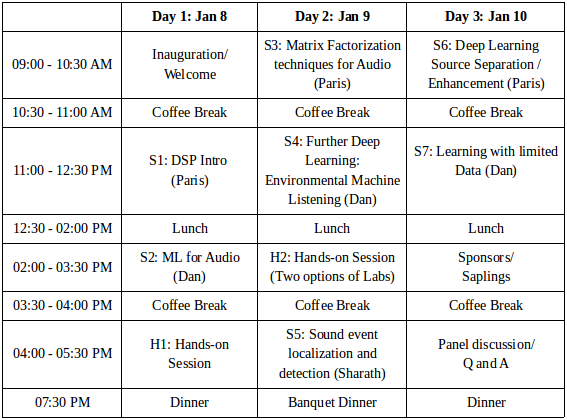

The theme for WiSSAP 2020 is Machine Listening: making sense of sound.

The event will host invited talks and tutorials by eminent researchers in the fields of machine perception, soundscape analysis and deep learning.

Humans have an amazing ability to make sense of their surroundings using sound. For example, we routinely deal with situations like the ringing of the telephone in the office, the honking of a horn while driving and the sound made by the contents of a shaken box. Machine listening deals with creating algorithms which can perform similar tasks, and more. With varied applications like self-driving cars, intelligent assistive devices and enhanced human-computer interaction, machine listening brings together various domains like audio signal processing and modelling, artificial intelligence and cognitive science.

Paris Smaragdis is a faculty member in the Computer Science and the Electrical and Computer Engineering departments of the University of Illinois at Urbana-Champaign, as well as a senior research scientist at Adobe Research. He completed his master's, PhD and postdoctoral studies at MIT, performing research on computational audition. His research focuses on machine learning approaches to various audio signal processing problems. In 2006 he was selected by MIT's Technology Review as one of the year's top young technology innovators for his work on machine listening; in 2015 he was elevated to IEEE Fellow for contributions to audio source separation and audio processing; and he served as an IEEE SPS Distinguished Lecturer in 2016-2017. He is currently the chair of IEEE's Audio and Acoustic Signal Processing Technical Committee and a member-at-large of the IEEE Signal Processing Society Board of Governors.

He has been the chair of the ICA/LCA steering committee, the chair of the IEEE Machine Learning for Signal Processing Technical Committee, a member of the IEEE Audio and Acoustic Signal Processing Technical Committee, an Associate Editor for the IEEE Signal Processing Letters, and a Senior Area Editor for the IEEE Transactions on Signal Processing. He holds 37 US patents and many others internationally. More here.

Dan Stowell is a research fellow applying machine learning to sound. He develops new techniques in structured "machine listening", using both machine learning and signal processing, to analyse soundscapes with multiple birds. He has also worked on voice, music, birdsong and environmental soundscapes.

He is an EPSRC research fellow based at Queen Mary University of London.

He is also a Fellow of the Alan Turing Institute. More here.

Sharath Adavanne is a doctoral researcher (graduating 2020) in the Audio Research Group at Tampere University, Finland, as well as a research scientist at ZAPR Media Labs. In his doctoral work, he developed techniques for computational auditory scene analysis using signal processing and deep learning methods. Currently, at ZAPR, he works on machine listening, speech recognition and synthesis. Previously, he worked in industry on problems related to music information retrieval, audio fingerprinting and general audio content analysis. More here.

Indian Institute of Technology Mandi

Mandi district

Himachal Pradesh

Pin: 175005

INDIA

Faculty and industry participants will be accommodated in the C V Raman Guest House at IIT Mandi.

Guest House rooms have already been reserved for WiSSAP, so you do not need to contact the Guest House to book a room.

Student participants will be accommodated in the student hostels (see the hostel room allotment lists for male and female student participants).

Registration charges do not include accommodation.

Registration is accepted online only, with the fee structure below. The early registration deadline has passed.

| Category | ISCA/IEEE Members | Non-members |
|---|---|---|
| Student | INR 4500 | INR 5500 |
| Academic Staff | INR 6500 | INR 7500 |
| Industry | INR 8500 | INR 9500 |

Pay the registration fee online to the Punjab National Bank (PNB) account below, and then submit the payment transaction ID and your details through the registration form: click here.

| Field | Details |
|---|---|
| Bank Account No. | 7315000100034369 |
| Bank Account Name | IIT Mandi SRIC Extension Activities |
| Bank Address | Punjab National Bank, IIT Kamand, Mandi, HP-175005 |
| IFSC Code | PUNB0731500 |

For more details about the theme, participation or sponsorship, feel free to email us at padman@iitmandi.ac.in or padmanrajan@gmail.com.

We're happy to announce the formation of IndSCA: the Indian Speech Communication Association.

The association has been registered and is actively implementing several measures to boost research activity in the country in all areas related to speech, audio and language processing. The office-bearers and executive committee members of IndSCA are distinguished faculty in the area from premier institutes across India.

IndSCA announces, for the first time, the institution of Doctoral Dissertation Awards and Masters Project Awards at the national level. Every year, nominations will be sought from eligible candidates for the awards. The shortlisted candidates will have to present their work at the following WiSSAP (Winter School on Speech and Audio Processing).

The award ceremony will also take place at WiSSAP.

IndSCA Awards 2020 will be the first in the series and will consider Ph.D. theses and Masters projects "defended" during the calendar years 2018 and 2019.

Who can nominate?

The advisor of the student or the head of the department/institute where the work was carried out. Self-nominations will not be accepted.

Who can be nominated?

Any student of an academic institute (government or private) in India whose degrees are recognized. The student should have defended the thesis/project before December 20th of the year in which the nominations are sought. Mere submission of the thesis is not sufficient; the thesis/project must also have been defended successfully. Since this is the first year IndSCA is calling for nominations after being constituted, we are considering all theses/projects defended during 2018 and 2019.

What does the Masters category comprise?

The Masters category includes M.E./M.Tech., M.Tech. (Research), and M.Sc. (Eng.) by Research degrees.

The due date for receiving the nominations is December 20, 2019. The nominations may be sent to Dr. Sriram Ganapathy (sriram.iisc@gmail.com).

Please find the nomination form here.

We have been privileged to have exciting learning experiences at our past WiSSAPs. Our past invited speakers have included: Lori L. Holt, Barbara Shinn-Cunningham, Rainer Martin, T. V. Ananthapadmanabha, Ivan Tashev, Christof Faller, Emanuel Habets, Marc Swerts, Mark Hasegawa-Johnson, Yi Xu, Kalika Bali, Shrikanth (Shri) S. Narayanan, Shihab Shamma, Hynek Hermansky, Li Deng, Tanja Schultz, Tara Sainath, Julia Hirschberg, Simon King, Tomoki Toda, Martin Cooke, De Liang Wang, Daniel P. W. Ellis, Bhiksha Raj, Gautham J. Mysore, V Ramasubramanian, Birger Kollmeier, B. Yegnanarayana, Walter Kellermann, Xavier Serra, Malcolm Slaney, John Makhoul, Jiri Navratil, Andreas Stolcke, Frédéric Bimbot, Alan W. Black, Jan P. H. van Santen, Richard Sproat, Kuldip Paliwal, Bastiaan Kleijn, K. Brandenburg, Steve Levinson and T. Svendsen. Use the links below to see the details of past WiSSAPs.

For any suggestions, feel free to contact our Programme Committee at x@y, where x and y for each member are listed below.

| Programme Committee | Affiliation | x | y |
|---|---|---|---|
| T V Sreenivas | Indian Institute of Science, Bangalore | tvsree | ece.iisc.ernet.in |
| Hema A. Murthy | Indian Institute of Technology, Madras | hema | cse.iitm.ac.in |
| Chandra Sekhar Seelamantula | Indian Institute of Science, Bangalore | chandrasekhar | iisc.ac.in |
| Sriram Ganapathy | Indian Institute of Science, Bangalore | sriramg | iisc.ac.in |
| Preeti Rao | Indian Institute of Technology, Bombay | prao | ee.iitb.ac.in |
| Padmanabhan Rajan | Indian Institute of Technology, Mandi | padman | iitmandi.ac.in |
| Dileep A D | Indian Institute of Technology, Mandi | addileep | iitmandi.ac.in |
| Anil Sao | Indian Institute of Technology, Mandi | anil | iitmandi.ac.in |
| Arnav Bhavsar | Indian Institute of Technology, Mandi | arnav | iitmandi.ac.in |
